10 May Sweetness and Sin

Imagine that the natural sciences were to suffer the effects of a catastrophe. A series of environmental disasters are blamed by the general public on the scientists. Widespread riots occur, laboratories are burnt down, physicists are lynched, books and instruments are destroyed. Finally a Know-Nothing political movement takes power and successfully abolishes science teaching in schools and universities, imprisoning and executing the remaining scientists. Later still there is a reaction against this destructive movement and enlightened people seek to revive science, although they have largely forgotten what it was. But all that they possess are fragments: a knowledge of experiments detached from any knowledge of the theoretical context which gave them significance; parts of theories unrelated either to the other bits and pieces of theory which they possess or to experiment; instruments whose use has been forgotten; half-chapters from books, single pages from articles, not always fully legible because torn and charred. Nonetheless all these fragments are reembodied in a set of practices which go under the revived names of physics, chemistry and biology. Adults argue with each other about the respective merits of relativity theory, evolutionary theory and phlogiston theory, although they possess only a very partial knowledge of each. Children learn by heart the surviving portions of the periodic table and recite as incantations some of the theorems of Euclid. Nobody, or almost nobody, realizes that what they are doing is not natural science in any proper sense at all. For everything that they do and say conforms to certain canons of consistency and coherence and those contexts which would be needed to make sense of what they are doing have been lost, perhaps irretrievably.

In such a culture men would use expressions such as ‘neutrino’, ‘mass’, ‘specific gravity’, ‘atomic weight’ in systematic and often interrelated ways which would resemble in lesser or greater degrees the ways in which such expressions had been used in earlier times before scientific knowledge had been so largely lost. But many of the beliefs presupposed by the use of these expressions would have been lost and there would appear to be an element of arbitrariness and even of choice in their application which would appear very surprising to us. What would appear to be rival and competing premises for which no further argument could be given would abound. Subjectivist theories of science would appear and would be criticized by those who held that the notion of truth embodied in what they took to be science was incompatible with subjectivism….

What is the point of constructing this imaginary world inhabited by fictitious pseudo-scientists and real, genuine philosophy? The hypothesis which I wish to advance is that in the actual world which we inhabit the language of morality is in the same state of grave disorder as the language of natural science in the imaginary world which I described. What we possess, if this view is true, are the fragments of a conceptual scheme, parts which now lack those contexts from which their significance derived. We possess indeed simulacra of morality, we continue to use many of the key expressions. But we have — very largely, if not entirely — lost our comprehension, both theoretical and practical, of morality.

Alasdair MacIntyre, After Virtue: A Study in Moral Theory, 1981.

A few weeks ago, Geoffrey Hinton – the man known as the “Godfather of AI” – left his job at Google so that he could, as he puts it, “talk about the dangers of AI without considering how this impacts” his now former employer. Dangers, you say? Do tell:

Geoffrey Hinton was an artificial intelligence pioneer. In 2012, Dr. Hinton and two of his graduate students at the University of Toronto created technology that became the intellectual foundation for the A.I. systems that the tech industry’s biggest companies believe is a key to their future.

On Monday, however, he officially joined a growing chorus of critics who say those companies are racing toward danger with their aggressive campaign to create products based on generative artificial intelligence, the technology that powers popular chatbots like ChatGPT.

Dr. Hinton said he has quit his job at Google, where he has worked for more than a decade and became one of the most respected voices in the field, so he can freely speak out about the risks of A.I. A part of him, he said, now regrets his life’s work.

“I console myself with the normal excuse: If I hadn’t done it, somebody else would have,” Dr. Hinton said during a lengthy interview last week in the dining room of his home in Toronto, a short walk from where he and his students made their breakthrough.

Dr. Hinton’s journey from A.I. groundbreaker to doomsayer marks a remarkable moment for the technology industry at perhaps its most important inflection point in decades.

This is indeed remarkable – although not for the reasons that the New York Times thinks it is. It is remarkable, rather, that anyone thinks it’s remarkable. Or, to put it less obliquely: none of this should surprise anyone. And the fact that the Times considers it “remarkable” is indicative of appalling historical ignorance. Indeed, “Dr. Hinton’s journey from A.I. groundbreaker to doomsayer” is typical in a world devoid of historical knowledge and of any genuine understanding of morality and ethics.

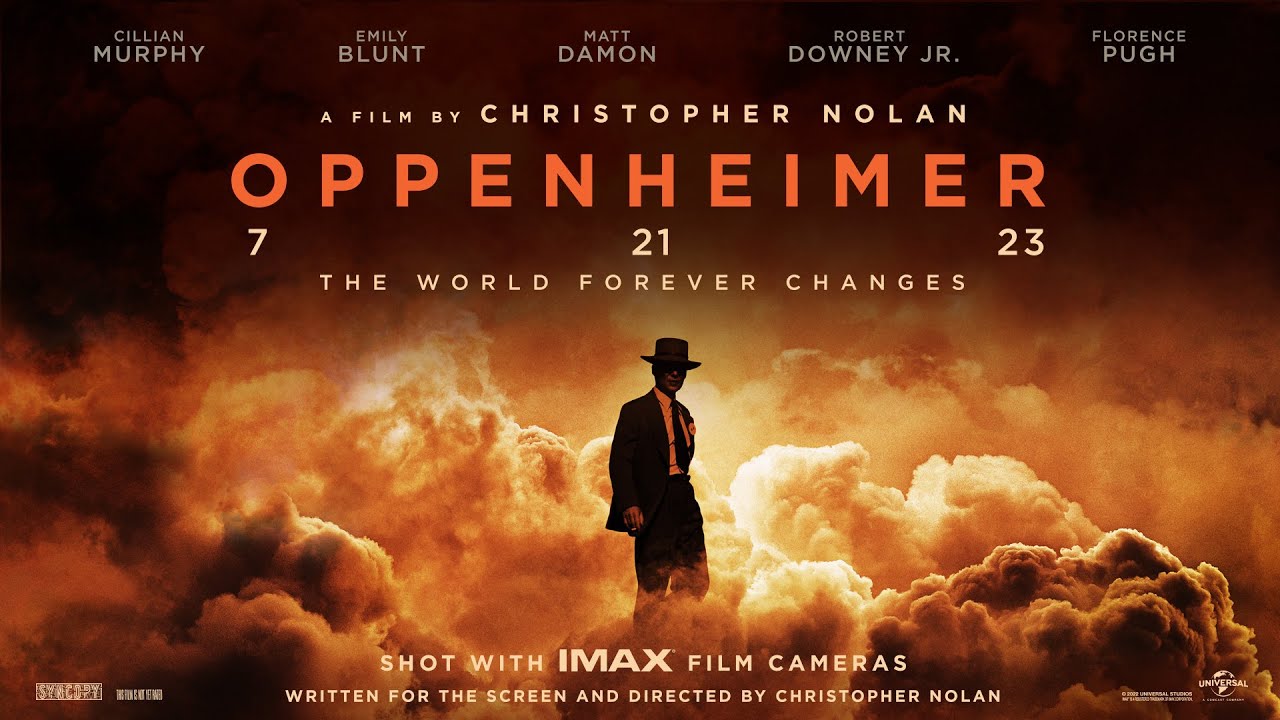

It is interesting – and ironic – that at the same time that Dr. Hinton has been decrying the destructive potential of his creation, various social media outlets have been flooded with the new trailer for, and commentary about, the movie due out in July from the famed filmmaker Christopher Nolan: “Oppenheimer.”

We hate to judge a movie by its trailer – because, obviously, we might be 100% wrong about everything. Nevertheless, the trailer for Oppenheimer makes it appear as if the famed physicist, J. Robert Oppenheimer, was, all along, a reluctant and begrudging participant in the development of the atomic bomb. This is simply untrue. Oppenheimer initially operated by a code that we have dubbed (fittingly enough) “The Oppenheimer Principle.” He stated, openly, that he and his fellow researchers operated under the guiding “ethical” belief that, “When you see something that is technically sweet, you go ahead and do it and you argue about what to do about it only after you have had your technical success.”

Obviously – and to his credit – Oppenheimer came to regret that sentiment and the work that it produced. As fate would have it, he was fortunate in a way that most other scientific pioneers will never be. He saw, in an incredibly graphic and poignant way, just what that attitude toward ethics in science produced. He put it this way, in a speech he gave in November 1947 at MIT:

Despite the vision and farseeing wisdom of our wartime heads of state, the physicists have felt the peculiarly intimate responsibility for suggesting, for supporting, and in the end, in large measure, for achieving the realization of atomic weapons. Nor can we forget that these weapons as they were in fact used dramatized so mercilessly the inhumanity and evil of modern war. In some sort of crude sense which no vulgarity, no humor, no overstatement can quite extinguish, the physicists have known sin; and this is a knowledge which they cannot lose.

Oppenheimer was lucky in another way as well. While he acknowledged his own moral failings, he also knew, as he intimates above, that the ultimate moral responsibility for the use of his work was borne by the “wartime heads of state,” in this specific case, President Truman. And as Kai Bird and Martin J. Sherwin noted in their classic tome American Prometheus, Truman too understood this responsibility:

At 10:30 a.m. on October 25, 1945, Oppenheimer was ushered into the Oval Office. President Truman was naturally curious to meet the celebrated physicist, whom he knew by reputation to be an eloquent and charismatic figure … Oppenheimer seemed maddeningly tentative, obscure—and cheerless. Finally, sensing that the president was not comprehending the deadly urgency of his message, Oppenheimer nervously wrung his hands and uttered another of those regrettable remarks that he characteristically made under pressure. “Mr. President,” he said quietly, “I feel I have blood on my hands.”

The comment angered Truman. He later informed David Lilienthal, “I told him the blood was on my hands—to let me worry about that.” … Afterwards, the President was heard to mutter, “Blood on his hands, dammit, he hasn’t half as much blood on his hands as I have. You just don’t go around belly-aching about it.” He later told Dean Acheson, “I don’t want to see that son-of-a-bitch in this office ever again.”

Geoffrey Hinton is not as fortunate as was Oppenheimer. He worked for Sundar Pichai, who is no Harry Truman. As the Times article above notes, that’s not so great:

Until last year, he said, Google acted as a “proper steward” for the technology, careful not to release something that might cause harm. But now that Microsoft has augmented its Bing search engine with a chatbot — challenging Google’s core business — Google is racing to deploy the same kind of technology. The tech giants are locked in a competition that might be impossible to stop, Dr. Hinton said.

We feel for Dr. Hinton. Like Oppenheimer, he believes that he has “known sin,” although “sin” is clearly a subjective term. As Alasdair MacIntyre notes in the quote at the top of this piece, no one today has any genuine conception of what morality means or, by extension, what sin is. We know the language, but we don’t know the process. We are lost and have no way of figuring out how to proceed.

Did Dr. Hinton do what he did simply because “it was sweet”? And if he did, does that mean he sinned? Is that conclusion mitigated by the fact that if he didn’t do it, someone else would have?

We – as a civilization – have no idea how to answer such questions and remarkably few guideposts to help us. And given that the “sweetness” of scientific discovery will continue to increase in step with the danger it poses, this should be something to which we dedicate a great deal more thought and practice.

The buck will not always stop with someone willing to take the blame and accept the consequences.